Streamlit Caching

Streamlit runs your script from top to bottom whenever you interact with the app.

This execution model makes development super easy. But it comes with two major challenges:

1. Long-running functions run again and again, which slows down your app.

2. Objects get recreated again and again, which makes it hard to persist them across reruns or sessions.

Streamlit provides a simple way to solve these challenges: Caching.

You can use the @st.cache_data and @st.cache_resource decorators to cache the results of functions and the state of objects, respectively.

Caching stores the results of slow function calls, so they only need to run once.

This makes your app faster and helps with persisting state across reruns and sessions.

Cached values are available to all users of your app.

If you need to save results that should be accessible within a session, use Session State.


Basic Example

Let's run a function without caching first, and then the same function with caching.

#Without Caching

import streamlit as st

import time

def without_caching():

    time.sleep(5) # Simulate a long-running computation

    return "Result without caching"

st.write("Running without caching...")

st.write(without_caching()) # This will take 5 seconds every time you interact with the app

#With Caching

import streamlit as st

import time

@st.cache_data

def with_caching():

    time.sleep(5) # Simulate a long-running computation

    return "Result with caching"

st.write("Running with caching...")

st.write(with_caching()) # This will take 5 seconds only the first time you interact with the app

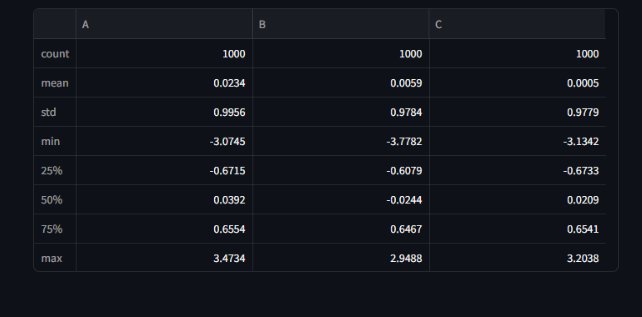

DataFrame transformations

You can use caching to store the results of expensive DataFrame transformations.

This is especially useful when working with large datasets.

import time

import numpy as np

import pandas as pd

import streamlit as st

@st.cache_data

def load_data():

    # Simulate loading a large dataset

    time.sleep(5)

    data = pd.DataFrame(np.random.randn(1000, 3), columns=['A', 'B', 'C'])

    return data

data = load_data()

st.write(data.describe()) # This will take 5 seconds only the first time you interact

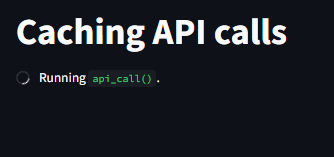

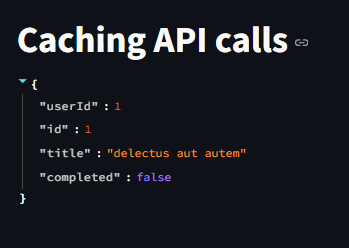

API calls

It's usually best to cache API calls. Doing so reduces the number of requests you send, which helps you stay within rate limits.

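import requests

import streamlit as st

st.title("Caching API calls")

@st.cache_data

def api_call():

    # A timeout keeps the app from hanging if the API does not respond

    response = requests.get("https://jsonplaceholder.typicode.com/todos/1", timeout=10)

    response.raise_for_status() # Raise on HTTP errors so a failed response is not cached

    return response.json()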

st.write(api_call()) # This will take time only the first time you interact with the app

Using st.cache_resource is very similar to using st.cache_data. But there are a few important differences in behavior:

st.cache_resource does not create a copy of the cached return value but instead stores the object itself in the cache. All mutations on the function’s return value directly affect the object in the cache, so you must ensure that mutations from multiple sessions do not cause problems. In short, the return value must be thread-safe.
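The difference between handing out a copy and handing out the stored object itself can be sketched in plain Python. The two classes below are hypothetical stand-ins, not Streamlit code, and `copy.deepcopy` is used only as an assumption-free way to produce a copy; Streamlit's own copying mechanism may differ.

```python
import copy


class CopyingCache:
    """Returns a fresh copy on every read (the copy behavior described above)."""

    def __init__(self, value):
        self._value = value

    def get(self):
        return copy.deepcopy(self._value)


class SharedCache:
    """Returns the stored object itself (the st.cache_resource behavior described above)."""

    def __init__(self, value):
        self._value = value

    def get(self):
        return self._value


copied = CopyingCache([1, 2, 3])
copied.get().append(4)       # mutates a throwaway copy
after_copy = copied.get()    # the cached list is unchanged

shared = SharedCache([1, 2, 3])
shared.get().append(4)       # mutates the cached object itself
after_shared = shared.get()  # every later caller sees the change
```

Here `after_copy` is still `[1, 2, 3]`, while `after_shared` is `[1, 2, 3, 4]`. With a shared object, a mutation made in one session is visible in all others, which is why the return value must be thread-safe.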

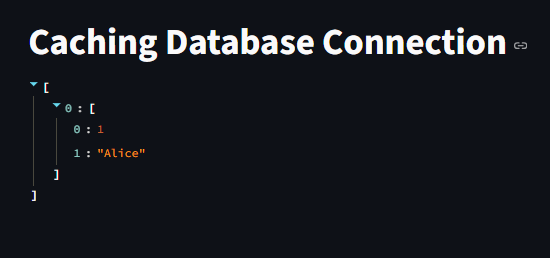

Using st.cache_resource

You can use @st.cache_resource to cache objects that are expensive to create, such as database connections or machine learning models.

This is useful for persisting state across sessions and users.

Here’s an example of caching a database connection:

import sqlite3

import streamlit as st

import time

@st.cache_resource

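def get_db_connection():

    time.sleep(5) # Simulate a long-running connection setup

    # Streamlit can run the script in a different thread on each rerun, so allow the shared connection to be used across threads

    conn = sqlite3.connect(':memory:', check_same_thread=False)

    return conn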

st.title("Caching Database Connection")

conn = get_db_connection() # This will take 5 seconds only the first time you interact with the app

cursor = conn.cursor()

cursor.execute("CREATE TABLE IF NOT EXISTS test (id INTEGER PRIMARY KEY, name TEXT)")

cursor.execute("INSERT INTO test (name) VALUES ('Alice')")

conn.commit()

cursor.execute("SELECT * FROM test")

st.write(cursor.fetchall()) # Display the data from the database

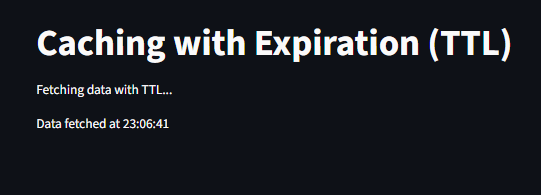

Caching with Expiration (TTL)

You can set a time-to-live (TTL) for cached data using the ttl parameter of the @st.cache_data decorator.

Once a cached entry is older than the TTL, the next call runs the function again and refreshes the cache.

This is useful for data that changes frequently, such as API responses or real-time data.
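The expiry logic behind a TTL can be sketched in plain Python. The `ttl_cache` decorator and the fake clock below are made up for illustration and are not Streamlit's implementation; the fake clock only exists so the example can "advance time" without waiting.

```python
import time


def ttl_cache(ttl_seconds, clock=time.monotonic):
    """Hypothetical decorator: cache one result and recompute it once it is older than ttl_seconds."""

    def decorator(func):
        state = {"value": None, "stored_at": None}

        def wrapper():
            now = clock()
            expired = state["stored_at"] is None or now - state["stored_at"] >= ttl_seconds
            if expired:
                state["value"] = func()   # recompute and remember when we did so
                state["stored_at"] = now
            return state["value"]

        return wrapper

    return decorator


fake_now = [0.0]  # mutable holder for the fake clock's current time
runs = []         # records the times at which the function body actually ran


@ttl_cache(ttl_seconds=60, clock=lambda: fake_now[0])
def fetch():
    runs.append(fake_now[0])
    return f"fetched at t={fake_now[0]:.0f}s"


a = fetch()         # t=0: cache is empty, the function runs
fake_now[0] = 30.0
b = fetch()         # t=30: entry is still fresh, the cached value is returned
fake_now[0] = 61.0
c = fetch()         # t=61: entry has expired, the function runs again
```

The function body runs at t=0 and t=61 only; the call at t=30 returns the value stored at t=0.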

import streamlit as st

import time

st.title("Caching with Expiration (TTL)")

@st.cache_data(ttl=60) # Cache expires after 60 seconds

def fetch_data():

    time.sleep(5)

    return "Data fetched at " + time.strftime("%H:%M:%S")

st.write("Fetching data with TTL...")

st.write(fetch_data()) # Takes 5 seconds on the first run, then again once the 60-second TTL has expired

Machine Learning Model

import streamlit as st

from sklearn.ensemble import RandomForestClassifier

@st.cache_resource

def load_model():

    # Placeholder: this creates an untrained model; a real app would load or train one here

    model = RandomForestClassifier()

    return model

model = load_model()

st.write("✅ Model loaded")

# The cached model instance is reused on every rerun; once it has been trained or loaded, you can use it for predictions without reloading it each time.

Cache Invalidation

You can invalidate the cache manually using the st.cache_data.clear() or st.cache_resource.clear() methods.

This is useful when you know that the underlying data has changed and you want to refresh the cache.

import streamlit as st

st.title("Cache Invalidation Example")

if st.button("Clear Cache"):

    st.cache_data.clear()

    st.cache_resource.clear()

    st.write("Cache cleared!")
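The clear-then-recompute cycle can also be tried outside Streamlit. The sketch below uses the standard library's `functools.lru_cache` purely as an analogy: its `cache_clear()` method plays the role that the `clear()` calls play above. `load_settings` is a made-up function.

```python
import functools

executions = 0  # counts how often the function body really runs


@functools.lru_cache(maxsize=None)
def load_settings():
    global executions
    executions += 1
    return {"version": executions}


first = load_settings()      # computed and stored
again = load_settings()      # served from the cache
load_settings.cache_clear()  # invalidate the cache manually
fresh = load_settings()      # computed again because the cache is empty
```

Until the cache is cleared, every call returns the stored result; after clearing, the next call recomputes it, which is what you want when the underlying data has changed.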

Debugging Cache Issues

If a cached function does not behave as expected, the show_spinner parameter can help. It is enabled by default and displays a spinner while the function body is running, that is, on a cache miss.

Watching for the spinner tells you when a function is actually being executed and when its result comes from the cache.

import streamlit as st

import time

st.title("Debugging Cache Issues Example")

@st.cache_data(show_spinner=True, ttl=30)

def slow_function():

    time.sleep(5)

    return "Finished slow function"

st.write(slow_function()) # Takes 5 seconds (with a spinner) on the first run and again after the 30-second TTL expires

Best Practices

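Use st.cache_data for data that a function returns, such as DataFrames, API responses, and computed results

Use st.cache_resource for shared resources such as database connections and machine learning models

Use ttl when the underlying data changes frequently

Avoid caching huge objects unless necessary, because cached values take up memory

Clear the cache when you change core app logic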
